The Invisible Layer of AI: Why Everyone Is Suddenly Talking About Harness Engineering

AI models got better. The real bottleneck moved to everything around them.

Everyone can feel that AI has become more capable. Far fewer can explain why similar models keep producing wildly different real-world results. That gap is pushing the industry toward a layer it talked around for a long time before it had a clean name for it: harness engineering.

The term may be new. The practice is not.

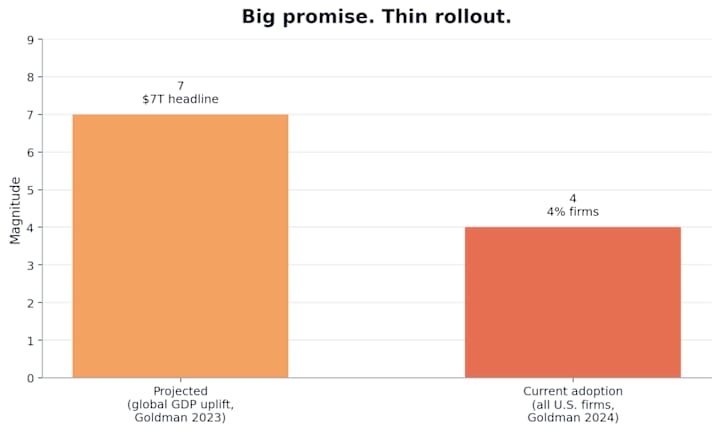

Big Promise, Thin Rollout

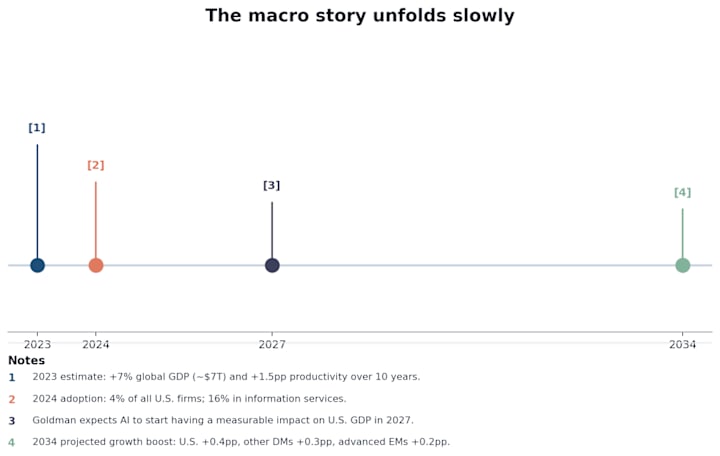

This is where the confusion starts. The macro claim is enormous. Goldman Sachs argued in April 2023 that generative AI could raise global GDP by 7%, or nearly $7 trillion, over a decade. The practical rollout, meanwhile, still looks early and uneven. By April 2024, Goldman said only 4% of U.S. firms had actually adopted generative AI. Even in information services, the figure was just 16%, with 23% expected within six months.

These numbers do not measure the same thing, but they tell the same story. The upside is obvious. The pickup is slow.

The money is on the table, but most teams still do not know how to pick it up. When adoption lags this hard behind capability, the missing piece is usually not just the model. It is the system layer around the model. The industry now has a name for that layer.

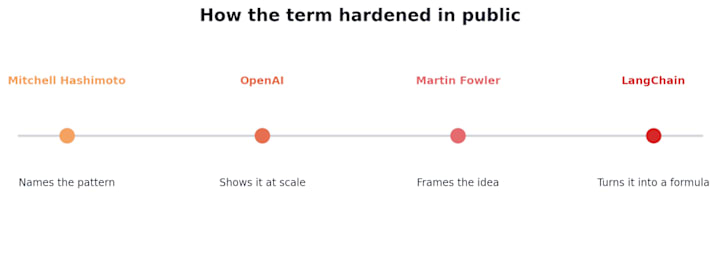

The Name Showed Up Fast

In February 2026, Mitchell Hashimoto gave the term its plainest and most useful framing:

When an agent makes a mistake, do not just rerun and hope.

Change the system so the same class of mistake stops coming back.

That same month, OpenAI published its own harness engineering essay and gave the phrase real weight. The numbers made it hard to dismiss: roughly one million lines of code and around 1,500 pull requests processed in five months, with the human role shifting away from writing application logic line by line and toward shaping the environment around the agent. After that, Martin Fowler's site gave the concept a firmer engineering frame, and LangChain compressed it into a slogan that was easy to repeat.

Once a slogan shows up, the whole market starts talking as if the thing itself just showed up too.

That is the mistake. The vocabulary moved fast. The behavior was already there.

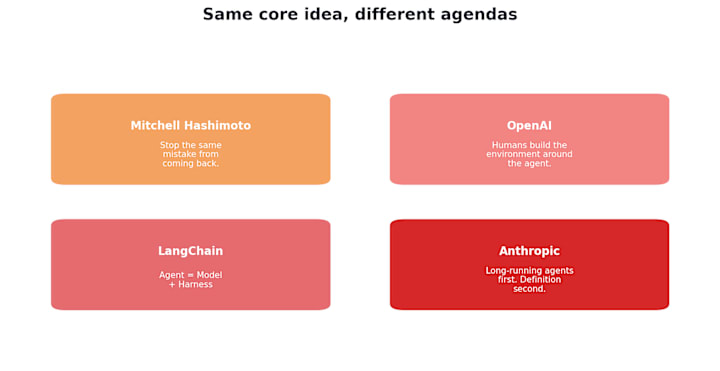

Same Core, Different Agendas

At the core, these people are describing the same shift. Around that core, though, each of them is pushing a slightly different agenda. Hashimoto is speaking as a builder. OpenAI is describing what this looks like inside a scaled code-generation workflow. LangChain is doing what frameworks do best, compressing a messy practice into a memorable formula. Anthropic, characteristically, has often been more practical than terminological, doing the work before worrying too much about what to call it.

That is why the conversation sounds noisy. People are not really arguing over whether this system layer exists. They are emphasizing different pieces of it depending on what they want the term to do.

Prompt, Context, Harness

The cleanest distinction here is not abstract. It is operational. Ask what changed.

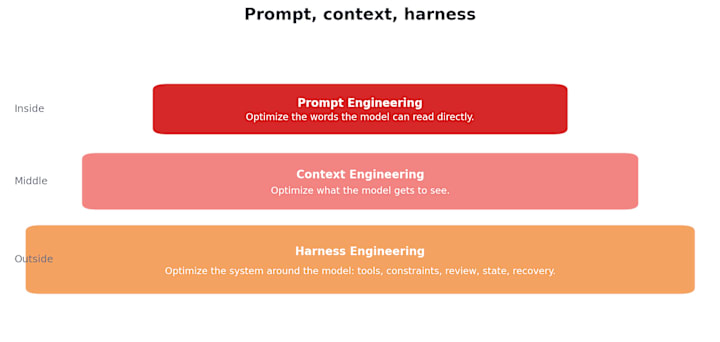

If you changed words the model can read directly, that is prompt work. If you changed what the model gets to see, that is context work. If you changed the invisible structure around the model, the tools, rules, review loops, memory, fallback paths, and execution boundaries that shape how the system behaves, that is harness work.

This is where people usually blur the categories. They see tools and call that the harness. They see MCP servers and call that the harness. They see a skill library and call that the harness. Not quite. Those are components. The harness is the assembled system.
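To make the difference between components and the assembled system concrete, here is a minimal sketch in Python. It is not taken from any of the cited essays. The `Harness` class, the `is_json` rule, and the `flaky_model` stand-in are all hypothetical names invented for illustration. The point is only that the validator, the retry limit, the fallback, and the log are separate pieces, and the harness is what you get once they are wired around the model.

```python
import json
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Harness:
    """Hypothetical wrapper: the model never sees any of this structure."""
    model: Callable[[str], str]      # any text-in, text-out callable
    validate: Callable[[str], bool]  # rule applied to the output
    fallback: Callable[[str], str]   # path taken when attempts run out
    max_attempts: int = 2
    log: List[str] = field(default_factory=list)

    def run(self, prompt: str) -> str:
        for attempt in range(1, self.max_attempts + 1):
            output = self.model(prompt)
            if self.validate(output):
                self.log.append(f"attempt {attempt}: accepted")
                return output
            self.log.append(f"attempt {attempt}: rejected")
        self.log.append("fallback used")
        return self.fallback(prompt)


def is_json(text: str) -> bool:
    """Example rule: accept only output that parses as JSON."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False


def flaky_model(prompt: str) -> str:
    """Stand-in for a real model call; always returns invalid JSON."""
    return "not json"


harness = Harness(model=flaky_model, validate=is_json, fallback=lambda p: "{}")
result = harness.run("Return valid JSON")
```

In this sketch the prompt never changes between attempts. Everything that decides whether the output is accepted, retried, or replaced lives outside the model, which is the sense in which the harness is the assembled system rather than any single tool.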

You Were Probably Growing Into It Already

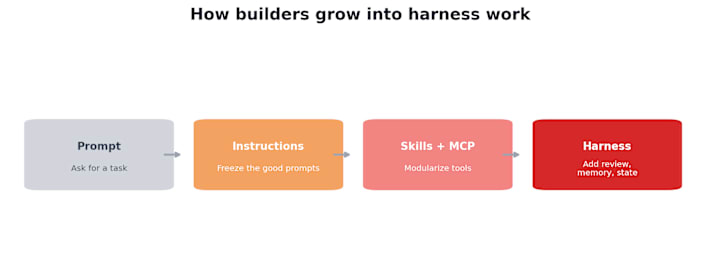

This is the part many discussions miss. The new phrase makes the practice sound younger than it really is. In reality, most builders who spend enough time with AI systems go through the same progression whether they have the vocabulary for it or not.

At first, everything looks like prompt engineering: write the function, fix the bug, return valid JSON. Then the same prompts keep recurring, so they harden into reusable instructions. As tasks get messier, more layers start accumulating around the model: tools, skills, MCP servers, retrieval, files, state, and memory. Then the system has to run longer, sometimes for hours, sometimes for days, and the outer structure begins to grow whether you planned for it or not: permissions, logs, human review, recovery, feedback loops, identity, and evaluation.

Once you look back from that point, the pattern becomes obvious. You did not suddenly discover a brand-new discipline. You kept adding structure around the model until the system stopped behaving like a toy. The work came first. The label came later.

Why the Name Arrived Now

Two years ago, the model itself was still the biggest bottleneck. If the model could not hold structure, stay consistent, or finish longer tasks reliably, there was only so much leverage to get from the outer layer. The horse was not ready for the harness.

Now it is, or at least ready enough that the bottleneck has moved. Give the same frontier model to two teams and you can still get completely different outcomes. One ships. One stalls. The model is the same. The difference is everything around it.

Goldman Sachs ends up telling the same story from the macroeconomic side. The capability jump happens first. The broad payoff lags behind. Goldman expects AI to begin making a measurable contribution to U.S. GDP in 2027, not instantly, and projects the bigger growth effects further out.

That lag does not mean the models are unimportant. It means native model capability still has to pass through a systems layer before it turns into durable, repeatable productivity. That layer is exactly what people are now trying to define.

One Last Filter

So the next time someone says harness engineering, ignore the sophistication of the phrase for a second and ask one simpler question:

Can the model see the thing you changed?

If yes, that is probably prompt work. If no, but it changes what the model receives, that is context work. If the model does not even know the change exists, that is real harness work.
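The filter above can be written down as a small decision function. This is only a sketch of the rule of thumb as stated here, not a formal taxonomy. The `Layer` enum, the `classify_change` function, and its two boolean parameters are hypothetical names chosen for illustration.

```python
from enum import Enum


class Layer(Enum):
    PROMPT = "prompt"
    CONTEXT = "context"
    HARNESS = "harness"


def classify_change(model_reads_it_directly: bool, changes_model_input: bool) -> Layer:
    """Apply the article's filter to a single change.

    model_reads_it_directly: the change is wording the model itself reads.
    changes_model_input: the change alters what the model receives
    (retrieved documents, files, memory) without rewording instructions.
    """
    if model_reads_it_directly:
        return Layer.PROMPT
    if changes_model_input:
        return Layer.CONTEXT
    # The model has no way to know the change exists.
    return Layer.HARNESS


# Illustrative changes, each answered by hand for the two questions.
reworded_instruction = classify_change(True, True)
swapped_retrieval_source = classify_change(False, True)
added_human_review_step = classify_change(False, False)
```

The order of the checks matters: a reworded instruction also changes what the model receives, so the first question has to be asked first for the categories to stay distinct.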

That is why the term matters. Not because it points to something brand new, but because it finally gives the industry a clean label for a layer builders had already been constructing long before the market agreed on the words.

Sources

Goldman Sachs, "Generative AI could raise global GDP by 7%," April 5, 2023.

Goldman Sachs, "AI may start to boost US GDP in 2027," November 7, 2023.

Goldman Sachs, "The US labor market is automating and becoming more flexible," April 25, 2024.

Mitchell Hashimoto, "My AI Adoption Journey," February 5, 2026.

OpenAI, "Harness engineering: leveraging Codex in an agent-first world," February 11, 2026.

Martin Fowler, "Harness Engineering," February 17, 2026.

Vivek Trivedy, LangChain, "The Anatomy of an Agent Harness," March 10, 2026.

About the Creator

Jera Lam

AI practitioner. Data-driven. Steering clear of the hype to avoid the noise.
